By Mike Chen | Lead Reviewer

Former SaaS PM testing AI tools against real business scenarios

The AI chatbot wars are heating up. OpenAI’s ChatGPT dominated 2023, but now it faces serious competition from Anthropic’s Claude and Google’s Gemini. Each promises to be smarter, faster, and more useful than the others.

But which one should you actually use?

We’ve spent weeks testing all three AI assistants across dozens of real-world tasks to give you a clear answer for each use case.

The Contenders

🏆 ChatGPT (OpenAI) – ATR Score: 8.8/10

- Models: GPT-4 Turbo, GPT-4, GPT-3.5

- Pricing: Free (GPT-3.5), Plus $20/month (GPT-4), Pro $200/month (unlimited GPT-4)

- Strengths: Versatility, extensive plugin ecosystem, web browsing, image generation

🥈 Claude (Anthropic) – ATR Score: 8.65/10

- Models: Claude 3 Opus, Claude 3 Sonnet, Claude 3 Haiku

- Pricing: Free (limited), Pro $20/month

- Strengths: Long context windows, thoughtful responses, coding, safety

🥉 Gemini (Google) – ATR Score: 8.35/10

- Models: Gemini Ultra, Gemini Pro, Gemini Nano

- Pricing: Free (Gemini Pro), Advanced $20/month (Gemini Ultra, Google One AI Premium)

- Strengths: Google integration, real-time information, multimodal capabilities

Round 1: Writing Quality

We asked each AI to write a 500-word blog post introduction about remote work.

ChatGPT: Produced engaging, conversational content with good flow. Natural tone but sometimes overly enthusiastic.

Claude: More thoughtful and nuanced. Slightly formal but exceptionally well-structured. Best for professional content.

Gemini: Solid but generic. Felt more like committee-written content. Accurate but with less personality.

Winner: Claude for professional writing, ChatGPT for casual/creative content

Round 2: Coding Assistance

We asked each AI to debug a Python script and explain the issue.

ChatGPT: Quickly identified the bug and provided a clear fix. Good explanations but occasionally over-explained simple concepts.

Claude: Excellent at understanding context. Provided not just a fix but an explanation of why the bug occurred and how to prevent similar issues.

Gemini: Correct solution but less detailed explanation. Good for quick fixes, less educational.

Winner: Claude – Best explanations and teaching approach

Round 3: Research and Fact-Checking

We asked each AI: “What are the key findings from the latest IPCC climate report?”

ChatGPT: With browsing enabled, pulled recent information accurately. Good synthesis of sources.

Claude: Acknowledged its training cutoff and couldn’t access real-time info. However, provided excellent context from its training data.

Gemini: Leveraged Google’s search capabilities to provide the most up-to-date information with source citations.

Winner: Gemini – Best for current events and research

Round 4: Complex Reasoning

We gave each AI a multi-step logic puzzle involving scheduling conflicts.

ChatGPT: Solved it correctly and showed its work step-by-step, making it easy to follow.

Claude: Not only solved it but identified potential edge cases we hadn’t considered.

Gemini: Solved it correctly but with less detailed reasoning.

Winner: Claude – Best at complex reasoning and edge case detection

Round 5: Creative Tasks

We asked each AI to write a short sci-fi story (200 words) about AI consciousness.

ChatGPT: Most creative and engaging. Natural storytelling with good pacing.

Claude: More philosophical and thought-provoking. Slower paced but deeper themes.

Gemini: Competent but predictable. Safe storytelling without risks.

Winner: ChatGPT – Most creative and engaging

Round 6: Long Context Understanding

We uploaded a 50-page PDF and asked specific questions about details scattered throughout.

ChatGPT: Handled it well but occasionally lost track of context in longer documents.

Claude: Exceptional. Its 200k-token context window let it keep the entire document in context and reference it accurately.

Gemini: Good performance but not as consistent as Claude for very long documents.

Winner: Claude – Superior long context handling

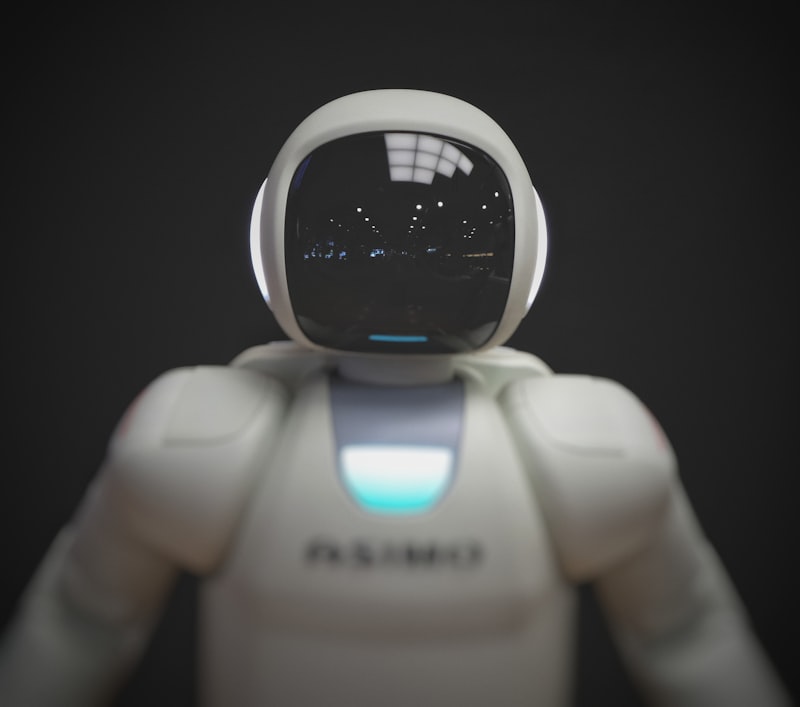

Round 7: Image Understanding

We uploaded images and asked each AI to analyze them.

ChatGPT: Good basic description and analysis. Best for creative interpretation.

Claude: Detailed and accurate descriptions. Excellent at reading text in images.

Gemini: Strongest multimodal capabilities. Could understand complex visual relationships.

Winner: Gemini – Best overall image understanding

Round 8: Practical Tasks

We asked each AI to help plan a 7-day trip to Japan.

ChatGPT: Comprehensive itinerary with lots of creative suggestions. Well-organized.

Claude: Thoughtful itinerary considering pacing, rest days, and practical logistics.

Gemini: Leveraged Google Maps and real-time data for practical routing and timing.

Winner: Gemini for practicality, ChatGPT for creativity

ATR Score Breakdown

We evaluated each chatbot using our ATR Score™ framework across four weighted categories: Output Quality (40%), User Experience (25%), Value for Money (20%), and Features (15%).
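
To make the weighting concrete, here is a minimal sketch of how a score could be combined from the four categories. It assumes the ATR Score is a plain weighted average of per-category scores on a 0-10 scale, which this article does not spell out. The function name `atr_score` and the per-category numbers in `example` are hypothetical and invented for illustration; they are not our actual test results.

```python
# Category weights as stated above (they sum to 1.0).
WEIGHTS = {
    "output_quality": 0.40,
    "user_experience": 0.25,
    "value_for_money": 0.20,
    "features": 0.15,
}


def atr_score(category_scores: dict[str, float]) -> float:
    """Weighted average of per-category scores, each on a 0-10 scale.

    Assumption: a simple weighted sum; the real framework may differ.
    """
    missing = WEIGHTS.keys() - category_scores.keys()
    if missing:
        raise ValueError(f"missing category scores: {sorted(missing)}")
    return sum(WEIGHTS[name] * category_scores[name] for name in WEIGHTS)


# Hypothetical per-category scores, for illustration only.
example = {
    "output_quality": 9.0,
    "user_experience": 8.0,
    "value_for_money": 8.0,
    "features": 9.0,
}
print(round(atr_score(example), 2))
```

Because Output Quality carries 40% of the weight, a one-point change there moves the total by 0.4, while the same change in Features moves it by only 0.15.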

Which One Should You Choose?

Choose ChatGPT if you:

- Want the most versatile all-rounder

- Need image generation (DALL-E 3 included)

- Value creative writing and brainstorming

- Want extensive plugins and integrations

- Are already invested in the OpenAI ecosystem

Choose Claude if you:

- Write long-form content or code

- Need exceptional reasoning abilities

- Value thoughtful, nuanced responses

- Work with large documents or codebases

- Prefer safety and accuracy over speed

Choose Gemini if you:

- Need real-time, up-to-date information

- Use Google Workspace extensively

- Want practical, actionable advice

- Need strong multimodal capabilities

- Prefer an integrated experience with Google services

Can You Use More Than One?

Absolutely! Many power users subscribe to multiple AIs and use them for different tasks:

- ChatGPT for creative work and general questions

- Claude for coding and analysis

- Gemini for research and Google integration

Most offer free tiers, so you can try them all before committing.

The Bottom Line

There’s no clear winner—it depends on your use case.

For most people: ChatGPT Plus ($20/month) remains the best all-around value. It’s versatile, creative, and constantly improving.

For writers and developers: Claude Pro ($20/month) edges ahead with superior reasoning and long-context handling.

For researchers and Google users: Gemini Advanced (included with Google One AI Premium, $20/month) offers the best real-time information and ecosystem integration.

Frequently Asked Questions

Is ChatGPT better than Claude?

It depends on the task. ChatGPT Plus is more versatile and creative, making it better for brainstorming and varied content. Claude Pro is stronger for long-form, analytical, and professional writing where accuracy matters. Our testing gave ChatGPT an ATR Score of 8.8/10 vs Claude’s 8.65/10, but Claude won our coding and reasoning rounds.

Which AI chatbot is best for coding?

Claude Pro is the best AI chatbot for coding. In our tests, it provided the most detailed explanations, identified edge cases other AIs missed, and explained why the bug occurred and how to prevent similar issues. Its 200k-token context window also helps it work across large codebases without losing track.

Can I use these AI chatbots for free?

Yes! All three offer free tiers:

- ChatGPT: Free with GPT-3.5 (unlimited)

- Claude: Free with usage limits

- Gemini: Free with Gemini Pro

The free versions are solid for casual use, but paid tiers ($20/month) unlock faster models, priority access, and additional features.

Which AI chatbot is best for research and current events?

Gemini Advanced is best for research and current events. It leverages Google’s search capabilities to provide real-time information with source citations. ChatGPT Plus can browse the web but isn’t as tightly integrated with search. In our tests, Claude acknowledged its training cutoff and could not access real-time information.

Are these AI chatbots safe to use for work?

Yes, but with precautions:

- Don’t share confidential data – Assume all inputs are logged

- Verify outputs – All three can hallucinate facts

- Use paid tiers for business – Better data privacy policies

- Claude is most cautious – Best for sensitive topics

Always fact-check important information and follow your company’s AI usage policies.

Ready to Choose Your AI Assistant?

Start with the free versions and see which fits your workflow: